#Self-drivingCar

Tesla Confesses to California DMV Self-Driving Tech is Overhyped

Back in January, Tesla CEO Elon Musk said he remained confident that his company would be able to deliver a self-driving vehicle exceeding the capabilities of an average human pilot by the end of 2021. But this has become a tired promise, used almost reflexively by automakers for years, and the inevitable shifting of the goalposts has become so predictable that nobody even bothers to get upset anymore. Being lied to is just part of everyday living, and the automotive sector is just one droplet in the overflowing bathtub of mendacity.

Unfortunately, organizations keep making the mistake of expecting the benefit of the doubt while they repeat the same fables. We know they’re working on solid-state batteries and autonomous cars, but they’re hitched to unrealistic expectations and completely fabricated timelines that draw our focus while they engage in slimier practices on the sly. While holding them accountable is often easier said than done, catching them in a lie is usually fairly simple. For example, the California Department of Motor Vehicles accidentally called out Tesla on the full self-driving (FSD) beta it’s been testing with employees.

Are Government Officials Souring On Automotive Autonomy?

Thanks to the incredibly lax and voluntary guidelines outlined by the National Highway Traffic Safety Administration, automakers have had free rein to develop and test autonomous technology as they see fit. Meanwhile, the majority of states have seemed eager to welcome companies to their neck of the woods with a minimum of hassle. But things are beginning to change now that a handful of high-profile accidents have forced public officials to question whether the current approach to self-driving cars is the correct one.

The House of Representatives has already passed the SELF DRIVE Act. But its bipartisan companion piece, the AV START Act, has been hung up in the Senate for months now. The intent of the legislation is to remove potential barriers to autonomous development and fast-track the implementation of self-driving technology. But a handful of legislators and consumer advocacy groups have claimed AV START doesn’t place a strong enough emphasis on safety and cybersecurity. That’s interesting, considering SELF DRIVE appeared to be easier on manufacturers and passed with overwhelming support.

Of course, it also passed before the one-two punch of vehicular fatalities in California and Arizona from earlier this year. Now some policymakers are admitting they probably don’t understand the technology as well as they should and are becoming dubious that automakers can deliver on the multitude of promises being made. But the fact remains that some manner of legal framework needs to be established for autonomous vehicles, because the current situation is a bit of a confused free-for-all.

Tesla and NTSB Squabble Over Crash; America Tries to Figure Out How to Market 'Mobility' Responsibly

The National Transportation Safety Board, which is currently investigating last month’s fatal crash involving Tesla’s Autopilot system, has removed the electric automaker from the case after it improperly disclosed details of the investigation.

Since nothing can ever be simple, Tesla Motors claims it left the investigation voluntarily. It also accused the NTSB of violating its own rules and of prioritizing headlines over promoting safety and allowing the brand to provide information to the public. Tesla said it plans to make an official complaint to Congress on the matter.

The fallout came after the automaker disclosed what the NTSB considered to be investigative information before it was vetted and confirmed by the investigative team. On March 30th, Tesla issued a release stating the driver had received several visual and one audible hands-on warning before the accident. It also outlined factors it believed contributed to the brutality of the crash and appeared to place the blame on the vehicle’s operator. The NTSB claims any release of incomplete information runs the risk of promoting speculation and incorrect assumptions about the probable cause of a crash, doing a “disservice to the investigative process and the traveling public.”

Driving Aids Allow Motorists to Tune Out, NTSB Wants Automakers to Fix It

Driving aids are touted as next-level safety tech, but they’re also a bit of a double-edged sword. While accident avoidance technology can apply the brakes before you’ve even thought of it, manage your following distance, and keep your car in the appropriate lane, it can also lull you into a false sense of security.

Numerous members of our staff have experienced this firsthand, including yours truly. The incident usually plays out a few minutes after testing adaptive cruise control or lane assist. Things are progressing smoothly, then someone moves into your lane and the car goes into crisis mode, causing you to ruin your undergarments. You don’t even have to be caught off guard for it to be a jarring experience, and it’s not difficult to imagine an inexperienced, inattentive, or easily panicked driver making the situation much worse.

Lane keeping also has its foibles. Confusing road markings or snowy conditions can really throw it for a loop. But the problem is that its entire purpose is to let motorists take a more passive role while driving. So what happens when it fails to function properly? In ideal circumstances, you endure a moderate scare before taking more direct command of your vehicle. But in a worst-case scenario, you go off the road or collide with an object at highway speeds.

Arizona, Suppliers Unite Against Uber Self-driving Program

Ever since last week’s fatal accident, in which an autonomous test vehicle from Uber struck a pedestrian in Tempe, Arizona, it seems like the whole world has united against the company. While the condemnation is not undeserved, there appears to be an emphasis on casting the blame in a singular direction to ensure nobody else gets caught up in the net of outrage. But it’s important to remember that, while Uber has routinely displayed a lack of interest in pursuing safety as a priority, all autonomous tech firms are being held to the same low standards imposed by both local and federal governments.

Last week, lidar supplier Velodyne said Uber’s failure was most likely on the software end as it defended the effectiveness of its hardware. Since then, Aptiv, the supplier of the Volvo XC90’s radar and camera, claimed Uber disabled the SUV’s standard crash avoidance systems to implement its own. This was followed by Arizona Governor Doug Ducey suspending all of Uber’s autonomous testing in the state on Monday, one week after the incident and Uber’s self-imposed suspension.

Video of the Autonomous Uber Crash Raises Scary Questions, Important Lessons

On Wednesday evening, the Tempe Police Department released a video documenting the final moments before an Uber-owned autonomous test vehicle fatally struck a woman earlier this week. The public response has been varied. Many people agree with Tempe Police Chief Sylvia Moir that the accident was unavoidable, while others accuse Uber of vehicular homicide. The media’s take has been somewhat more nuanced.

One thing is very clear, however: the average person still does not understand how this technology works in the slightest. While the darkened video does seem to support claims that the victim appeared suddenly, the claim that it is enough to exonerate Uber is mistaken. The victim, Elaine Herzberg, does indeed cross directly into the path of the oncoming Volvo XC90 and is visible for only a fleeting moment before the strike, but the vehicle’s lidar system should have seen her well before that. Any claims to the contrary are irresponsible.

Unpacking the Autonomous Uber Fatality as Details Emerge [Updated]

Details are trickling in about the fatal incident in Tempe, Arizona, where an autonomous Uber collided with a pedestrian earlier this week. While a true assessment of the situation is ongoing, the city’s police department seems ready to absolve the company of any wrongdoing.

“The driver said it was like a flash, the person walked out in front of them,” explained Tempe police chief Sylvia Moir. “His first alert to the collision was the sound of the collision.”

This claim leaves us with more questions than answers. Research suggests autonomous driving aids lull people into complacency, dulling the senses and slowing reaction times. But most self-driving hardware, including Uber’s, uses lidar that can functionally see in pitch-black conditions. Even if the driver could not see the woman crossing the street (there were streetlights), the vehicle should have picked her out clear as day.

Self-Driving Uber Vehicle Fatally Strikes Pedestrian, Company Halts Autonomous Testing

In the evening hours of March 18th, a pedestrian was fatally struck by a self-driving vehicle in Tempe, Arizona. While we all knew this was an inevitability, many expected the first casualty of progress to come later in the autonomous development timeline. The vehicle in question was owned by Uber Technologies and the company has admitted it was operating autonomously at the time of the incident.

The company has since halted all testing in the Pittsburgh, San Francisco, Toronto, and greater Phoenix areas.

If you’re wondering what happened, so is Uber. The U.S. National Transportation Safety Board (NTSB) has opened an investigation into the accident and is sending a team to Tempe. Uber says it is cooperating with authorities.

Automated Cars Are Not Able to Use the Automated Car Wash

We’ve been cautiously optimistic about the progress of autonomous driving. The miraculous technology is there, but implementing it effectively is an arduous task of the highest order. A prime example of this is how easy it is to “blind” a self-driving vehicle’s sensors.

TTAC’s staff has had its share of minor misadventures with semi-autonomous driving aids, be it during encounters with thick fog or heavy snow, but truly self-driving cars have even more sensitive equipment on board — and all of it needs to function properly.

That makes even the simple task of washing a self-driving car far more complicated than one might expect, as anything other than meticulous hand washing is a big no-no. Automated car washes could potentially dislodge expensive sensors, scratch them, or leave behind soap residue or water spots that would affect a camera’s ability to see.

Autonomous Features Are Making Everyone a Worse Driver

Autonomous vehicles are about as polarizing a subject as you could possibly bring up around a group of car enthusiasts. Plenty of gearheads get hot under the collar at the mere concept of a self-driving car. Meanwhile, automotive tech fetishists cannot wait to plant their (I’m assuming) khaki chinos into the seat of an autonomous vehicle and enjoy a coffee without the hindrance of having to actually drive the thing to their destination.

I’ve previously discussed how autonomous cabs will become unparalleled filth-boxes, destined for salacious behavior. Because without driver oversight, why not sneeze into your hand and wipe it on the seat back? Now, surveys are beginning to indicate privately owned computer-controlled cars will be subject to similar activities — with some drivers suggesting they’ll have no qualms about having sex, drinking booze, or binge eating behind the wheel.

That’s the future we’re being promised, but a lot of autonomous features have already made it into modern production cars. Word is, they’re starting to make us terrible drivers. It’s enough to worry automakers to the point where they’re considering implementing an array of systems to more actively encourage driver involvement on a platform that’s designed to do the opposite.

Get ready to drive your self-driving car.

iCar or NoCar? Apple Car Project Hit With Layoffs, Future Uncertain

Apple’s self-driving car project seems to have reached the point in a TV show where new actors take on old roles and the script flies out the window.

According to the New York Times, the tech giant’s Project Titan has been hit with a slew of layoffs, leaving several areas of the project boarded up and in the dark.

Is the shadowy Apple car, once the dream of nerds everywhere, powering down?

Finally: Robotic Cars That Fire Guns (But Only in Iraq … For Now)

Mosul is the largest population center in northern Iraq, but it has been in ISIS hands since extremist militants overran the city of 2.5 million in June of 2014.

Taking the city back has proven a challenge, but the Iraqi Army, backed by allied forces, plans to deploy a new tool to make it happen. It’s no longer than a Mazda MX-5, and not nearly as sexy, but Iraq thinks this four-wheeled robotic death car gives it a big advantage.

GM is Spending a Lot of Cash so You Don't Have to Drive

General Motors wants you to have more texting time in your car, and it’s dropping a lot of cash to see that it happens.

The company announced Friday that it will purchase San Francisco-based Cruise Automation in order to access and advance its self-driving vehicle technology, a buy worth upwards of $1 billion, Fortune reports.

The three-year-old startup has been busy gathering investor capital to develop and push aftermarket kits designed to turn regular vehicles into autonomous cars.

TTAC News Round-up: Honda Separates the Kids, Toyota Funks It Up, and the Costs Are Too Damn High at FCA

The CEO of Honda is pulling the car over and giving a stern lecture to the kids in the backseat.

That, a Scion gets a corporate makeover, Google goes in for autonomous feng shui, Fiat Chrysler Automobiles is drowning in modules and a famous British racetrack could get even Britisher … after the break!

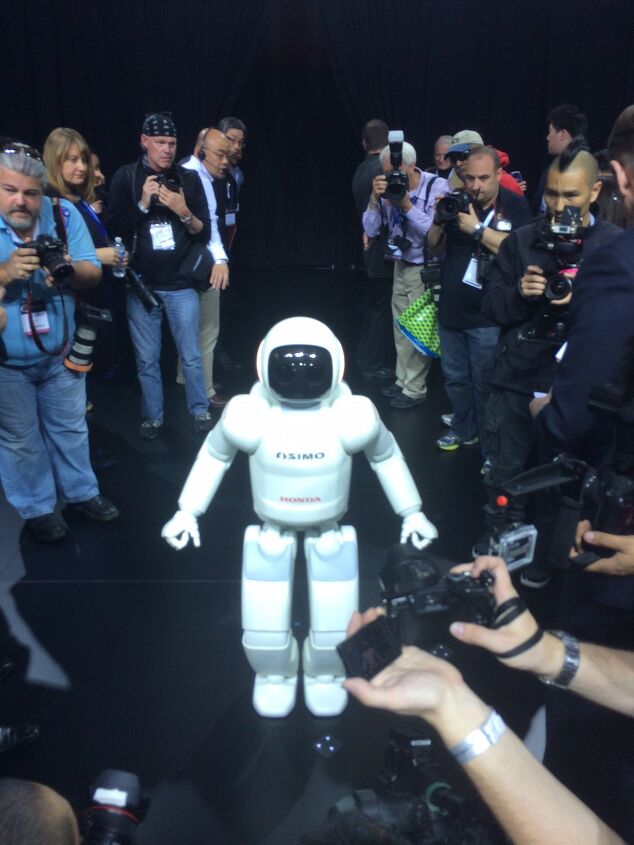

Honda's Next Innovation In Driverless Cars Has Two Legs, Not Four Wheels

Last week’s New York Auto Show saw Honda make a robot – and not a car – the centerpiece of its press conference. Even though it had a very important new product to introduce, Honda instead chose to have ASIMO do a song and dance number, and then promptly depart in the middle due to an (admittedly adorable) case of “stage fright.”

