Autonomous Vehicles, Malicious Drivers

You’ve probably heard the news: An autonomous Google-Lexus brushed a city bus at about 2 miles per hour. There’s been plenty of discussion of the incident, some of it oddly hysterical, but most of it has centered on the future ability of autonomous vehicles to operate in traffic as it exists today. In other words, from everything I’ve read, the Google automotive team, and everybody else working on the autonomous-car problem, assumes that their cars will have to deal with the same conditions that you and I might face if we were behind the wheel of that automobile.

If that is truly the case, then autonomous vehicles are in for a rougher ride than anybody yet suspects, because they won’t have to deal with the same conditions that human drivers do. Their reality will be much, much worse.

Let me start with a confession of sorts: As a teenager, I read a considerable percentage of the nearly three hundred books that Isaac Asimov had written up to that point. Asimov was absurdly prolific in both pages and ideas, and his flexible imagination eventually made it possible for him to merge his two greatest series (the Foundation books and the Robot books) into a single, logically consistent universe. Future generations, however, will probably remember him best for his “Three Laws of Robotics.” I’ll reprint them here in case you spent your high school years having sex or going to parties instead of reading books:

- A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

- A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

The difficulties faced by robots and humans in a world where the Three Laws were widely applied formed the basis for much of Asimov’s storytelling. (If you change the order of those laws, by the way, there are unintended consequences.) Asimov, of course, was not a computer scientist; such a profession barely existed during the bulk of his productive life. Those of us who have some mild pretense to the aforementioned title understand that there was a tremendous amount of what I’ll call “hand-waving” in the Asimov robot books about the workings of intelligence and consciousness. Just to get a robot to the point where it could think about the Three Laws would be a feat far beyond anything we’re likely to accomplish in any of our lifetimes.

The real hand-wave on Asimov’s part, however, had nothing to do with robot nature and everything to do with our own. He imagined that the future would be a sort of updated version of ’50s America, as suggested by Donald Fagen’s song I. G. Y., full of respectful children, intact families, and socially homogeneous groups. As a fervent optimist, Asimov couldn’t begin to envision the kind of human garbage roaming the streets of the United States or Europe in 2016, nor could he have ever conceived of a Western culture that would simply pack up and abandon the future to violent, moronic, and deeply superstitious successors simply because nobody in Finland or Massachusetts could be bothered to have children at anything approaching a replacement rate.

Any truly Three-Laws-compliant robot couldn’t be permitted to leave a house located anywhere more vibrant or crowded than my hometown of Powell, Ohio; it would end up unwittingly assisting thugs on their crime sprees, as in the movie “Chappie,” or it would find itself disassembled and sold for parts. The unfortunate truth is that the robots of the future will have to be permitted to do some harm to human beings just to preserve themselves. It’s not really a problem; you can assume that 20 years from now the “person” known as Google and all of its metallic avatars will have considerably more rights than you do. Such is already the case, as you’ll find out in a hurry should you decide to send your next paycheck to a bank account in the Cayman Islands, but the gap will widen considerably in the decades to come.

Autonomous vehicles will need their own implementation of the Three Laws. Since no Google car is projected to achieve consciousness in the near future, those laws will have to be interpreted as software. I suspect that the software will follow these principles:

1. The Google car must obey the traffic laws unless obeying the traffic laws poses a hazard. As an example, if there is a stalled car ahead on a two-lane road, the Google car will have to have a set of conditions that allows it to pass on the double yellow, otherwise any stalled car would quickly accumulate a very long line of permanently halted Google cars behind it.

2. The Google car must yield to aggressive traffic or traffic operating in an illegal fashion. In other words, if somebody speeds up next to a Google car in traffic then forcibly cuts in, the Google car will have to let it in rather than “defending its spot” the way a human driver might.

3. The Google car must have a set of known responses for extraordinary environment behavior. Five years ago, I wrote a bit of fiction for this site about kids who use airbag inflators and custom-sewn fabric silhouettes to make cars “appear” suddenly in front of autonomous vehicles as a form of social revolt.
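As a rough illustration only, the three principles above could be sketched as a priority-ordered rule check. Nothing here reflects how Google's software actually works: the `Situation` fields, the `Action` names, and the ordering of the checks (extraordinary events first, yielding second, traffic-law exceptions last) are all assumptions made up for this example.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Action(Enum):
    PROCEED = auto()
    YIELD = auto()
    PASS_OBSTACLE = auto()
    STOP = auto()


@dataclass
class Situation:
    # Every field is a hypothetical input invented for this sketch.
    anomaly_detected: bool = False     # e.g. a "car" that appears out of nowhere
    aggressive_vehicle: bool = False   # somebody forcing their way into the lane
    lane_blocked: bool = False         # stalled car ahead on a two-lane road
    oncoming_lane_clear: bool = False  # passing on the double yellow would be safe


def choose_action(s: Situation) -> Action:
    # Extraordinary events get a known, conservative response (assumed: stop).
    if s.anomaly_detected:
        return Action.STOP
    # Aggressive or illegal traffic is always let in; the car never defends its spot.
    if s.aggressive_vehicle:
        return Action.YIELD
    # Traffic laws are obeyed unless that leaves the car stuck behind a stalled
    # vehicle, in which case it may pass, but only when the oncoming lane is clear.
    if s.lane_blocked:
        return Action.PASS_OBSTACLE if s.oncoming_lane_clear else Action.STOP
    return Action.PROCEED
```

The point of the sketch is the ordering: whichever check comes first wins, so a car that is both being cut off and facing a blocked lane yields rather than passes. A real system would have to weigh far more inputs than four booleans.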

Solving for answers to 1 and 2 will be tough enough, but I want to really focus on 3 because that’s where autonomous vehicles will spend a surprising amount of their decision time. There is no way, I repeat, no way that human beings will treat autonomous vehicles with any courtesy or decency whatsoever. Perhaps in Isaac Asimov’s Squeaky Clean All White Middle Class America Of The Future, people might stop at a crosswalk and let an autonomous vehicle pass by; but in the Crapsack World of the foreseeable future, you can guarantee that at least the following things will happen:

- People will attempt to get hit by autonomous vehicles so they can collect a payday in court. Honestly, I’m surprised that nobody’s engineered a Google-car-on-pedestrian strike yet; the pockets involved are deeper than normal humans can understand and the jury would be hugely sympathetic to any person STRUCK DOWN BY A ROBOT CAR.

- People will rob autonomous trucks and cars that are carrying valuable goods. There’s virtually no risk. Just roll a disabled car in front of the Google truck and strip it clean before the cops arrive. Or do the same to an autonomous limousine and rob the passengers with anything from a Desert Eagle to a box cutter.

- Hyper-aggressive drivers will force autonomous vehicles off the road to have their spots. Just imagine the usual two-lane merge situation with one stopped lane and one empty lane. Why wouldn’t you just cruise up until you see a Google car then pull in? The Google car has to let you in. It can’t defend its position. There’s too much liability involved.

- An entire subset of human beings (let’s call them “teens,” since that’s both media code for “anybody committing a crime who isn’t a member of a country club” and a reference to actual teenagers, who love chaos) will fuck with autonomous vehicles just because they can. They’ll throw rocks at them, drop things on them from overpasses, shoot at them, spray their sensors with paint, flatten their tires, and perform various other acts of mayhem. Just think of the hilarity involved in walking down the street and spraying the sensors of every autonomous car you see. Kids will do it because kids like to fuck stuff up, and autonomous vehicles are likely to become objects of particular hatred for generations of kids who resent not being allowed to buy an old car and drive it anywhere they want without their helicopter parents or the nanny state soft-fencing them in.

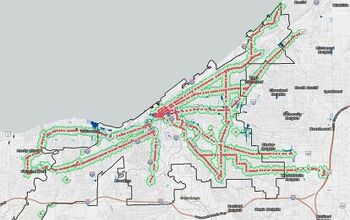

What’s going to happen to autonomous vehicles that travel through South Central Los Angeles or downtown Baltimore? Will their owners and operators simply accept that the “loss rate” for what amounts to a free ton of scrap metal is extremely high in those areas? Or will they place soft fences of their own around those high-loss areas, essentially denying transportation and freedom to anybody unlucky enough to be born in Hamsterdam instead of Fairfax County, VA? In states like New Mexico, where one in five drivers is an uninsured illegal immigrant, what’s it going to cost to insure your autonomous vehicle against hit-and-runs?

No battle plan, they say, survives the first contact with the enemy. And in this case, the enemy is us — the messy, chaotic, mentally ill, undocumented, angry, frustrated, overworked, underpaid, teeming masses of humanity. No sane person can think that autonomous cars can survive in that environment. It’s them or us in a fight to the death for control of the American road.

I’m not such a narcissistic egomaniac that I don’t realize that many, many intelligent people have pondered this question before today and likely come to conclusions that are better-informed but substantially similar to what I’ve described above. So you don’t have to worry about autonomous cars sharing the roads with human drivers and being subject to all of the hazards we’ve discussed. Rather, you can rest assured that our right to drive will simply disappear whenever it suits our West Coast tech elite. If we’re lucky, this unilateral takeover will only happen in places where population density and wealth make it easy, like San Francisco.

If we’re unlucky, however, the new order will simply be imposed upon us nationwide, the same way that Mr. Clinton imposed urban-focused gun control on rural towns where nobody’s committed a violent crime since before the Taft administration. If that day comes and the “Red Barchetta” scenario becomes law, you can rest assured that any power you have to vote or protest against the situation will have been thoroughly neutralized well ahead of time. You can, however, always pick up a rock.

More by Jack Baruth


Comments

Likely due to my naivete, I wasn't convinced of Jack's view that the tech 'elite' will soon dictate societal norms. But I just listened to this week's Smoking Tire podcast. Guest Dan Neil made two interesting observations. First, in a voluminous discussion of his role as a consultant for a sequel to the Cannonball Run film, he repeatedly states that cars are an environmental, financial, and temporal (due to parking, maintenance, etc.) 'burden' to Millennials. This 'burden' explained both the lack of Millennial purchasers and the new world of micro-leased uberized transport suitable to a low-wage gig economy. He further indicates that his views are shared by the film's producers because the movie needs to be a hit. The story line will thus feature cooperation and renewable energy in a world where gas is outlawed. The second was his utter contempt and derision in referring to a visit to the University of Oklahoma recruiting center where - heaven forbid - the athletes have the gall to pray three times a day. Simply stated, it is clear Neil and his Hollywood supervisors firmly believe Millennials find personal freedom 'burdensome' because it requires concerted effort, costs money, and a focus on your own needs. They have no tolerance for anyone who fails to conform to their view. This terrifies me. With the tone set by Hollywood - and a general lack of interest in critical thought among Millennials - it won't be too long before voters start banning any action contrary to society's best interest to 'protect' others exploiting the vacuum left by the abrogation of societal norms. This is a long way of saying I won't take my kids to see the Cannonball Run remake or any other movie with Neil as a consultant, but will willingly pay Mr. Roy to watch 32:07 (once released) and buy the Collectors Edition DVD.

So... did "The Good Wife" get this plot line from you? Great article, read it earlier and thought "that's fresh"; now watching telly tonight and there's a re-heated version on my screen...